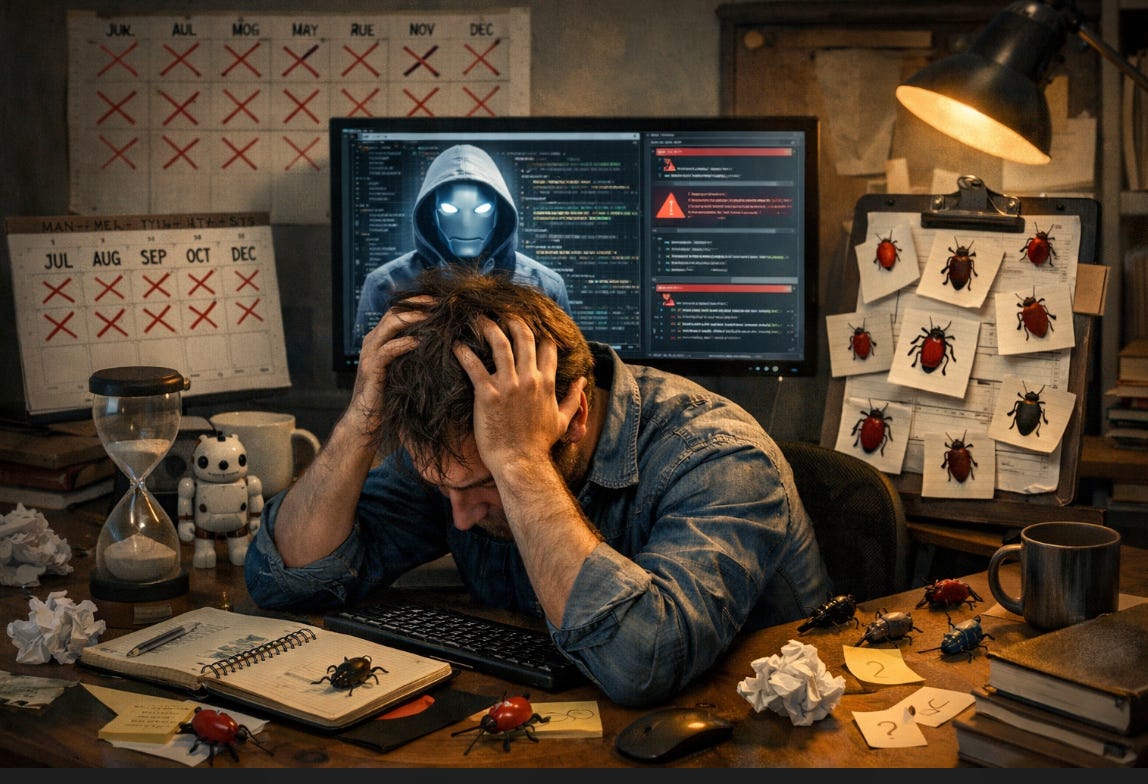

I Trusted AI Code for 6 Months. It Created 47 Subtle Bugs.

ChatGPT's code worked. Then production load hit 10K users.

Month 1: “This AI coding is amazing.”

Month 3: “Wait, why is this endpoint returning null sometimes?”

Month 6: “We’ve been shipping broken code for six months.”

Stack Overflow’s 2025 Developer Survey found that 66% of developers are frustrated by “AI solutions that are almost right, but not quite.”

I’m one of them.

Here’s what six months of trusting AI code cost us.